Why we believe the cloud needs its own virtual operating system — and CircleOS

History

Over the last few years, Circle has been involved in numerous large-scale web development projects in an advisory and development capacity. It is alarming to see how many developers, how many different job profiles and how much effort it takes to implement such projects.

But even small in-house projects require many different technologies and skills, which makes it hard to build and run apps at low cost.

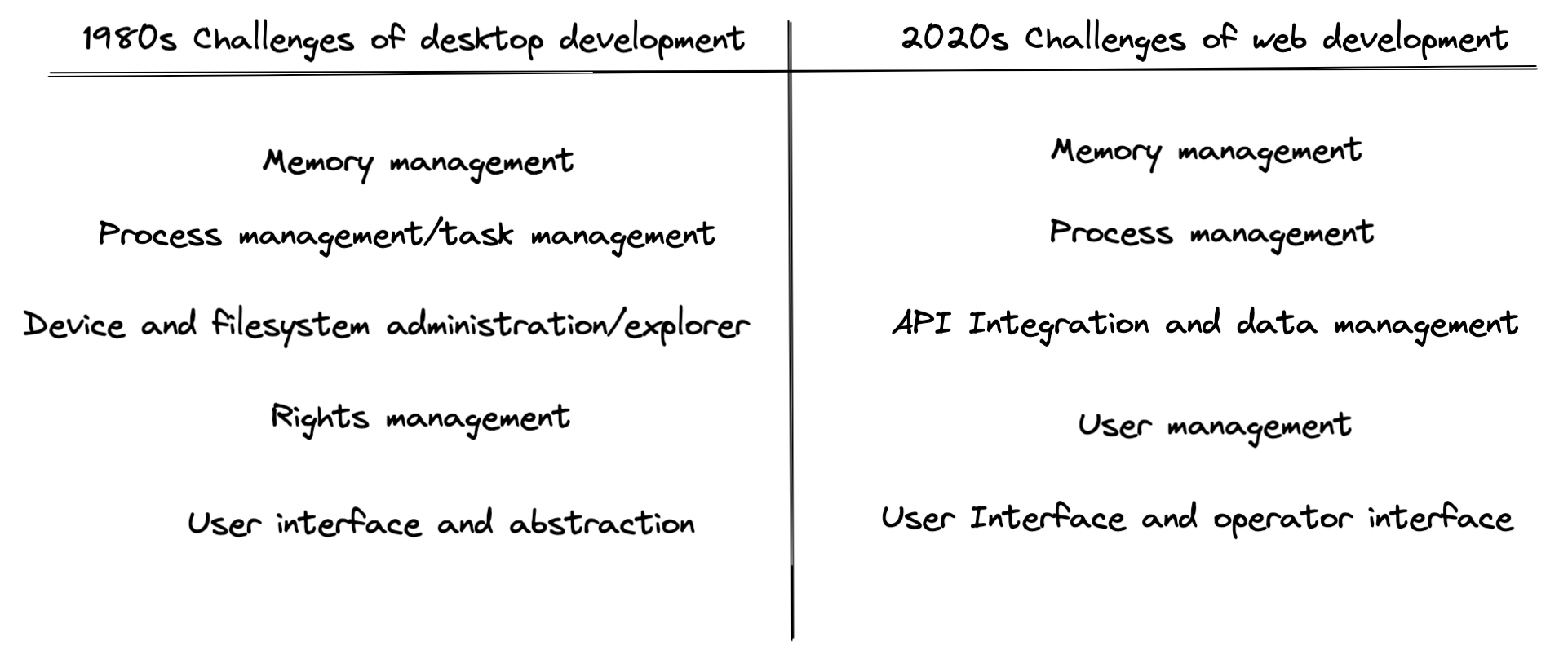

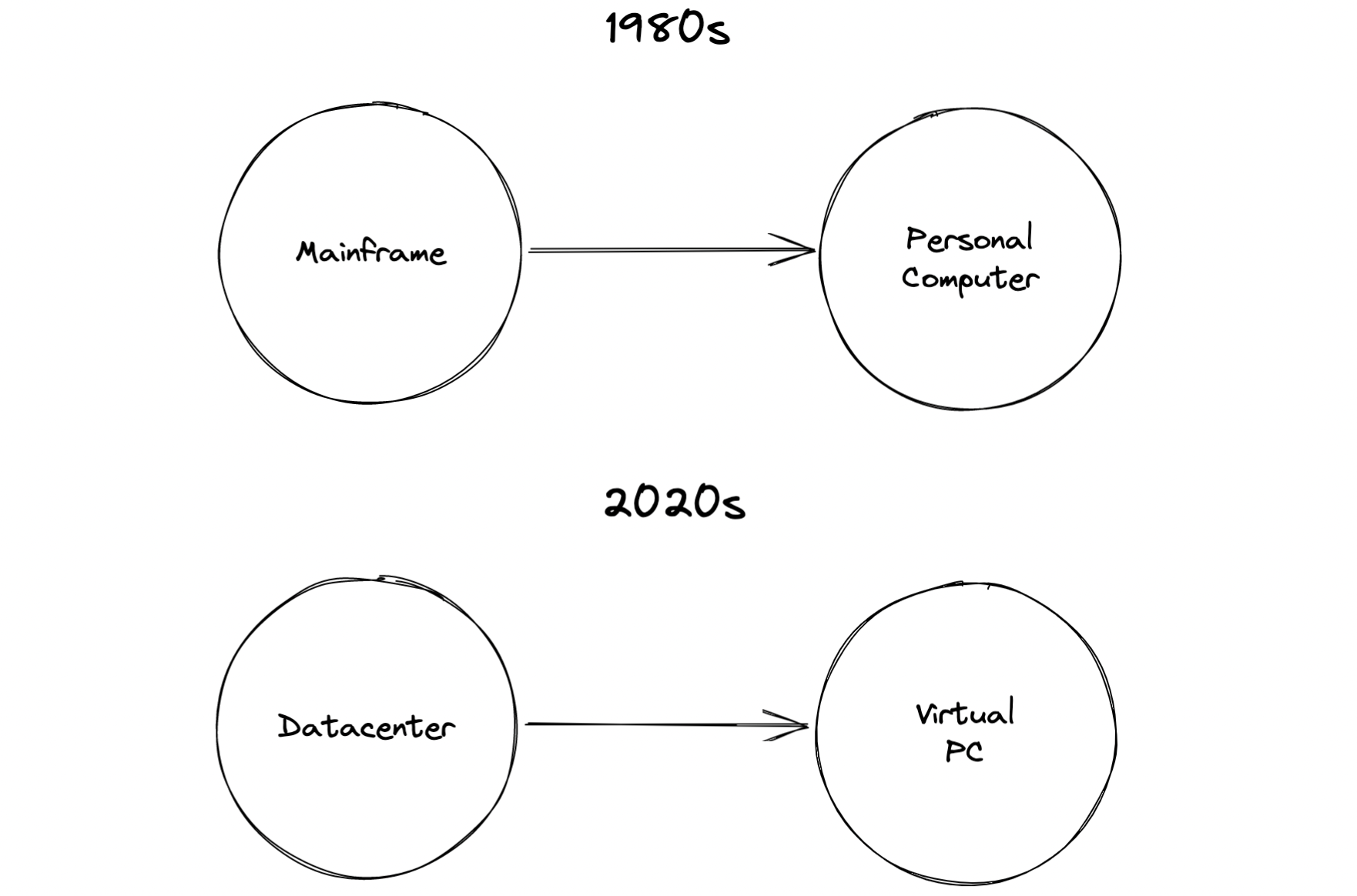

Software development faced a similar situation back in the 80s. At that time, mainframes were widely used to execute programs. Developers brought their programs to the mainframe to run them, which required a team of specialists.

This problem was solved by the personal computer and a new kind of operating system with a user interface and support for high-level programming languages. The creators of these operating systems did not develop every feature themselves. Instead, they took existing technological puzzle pieces and combined them harmoniously with components they built on their own.

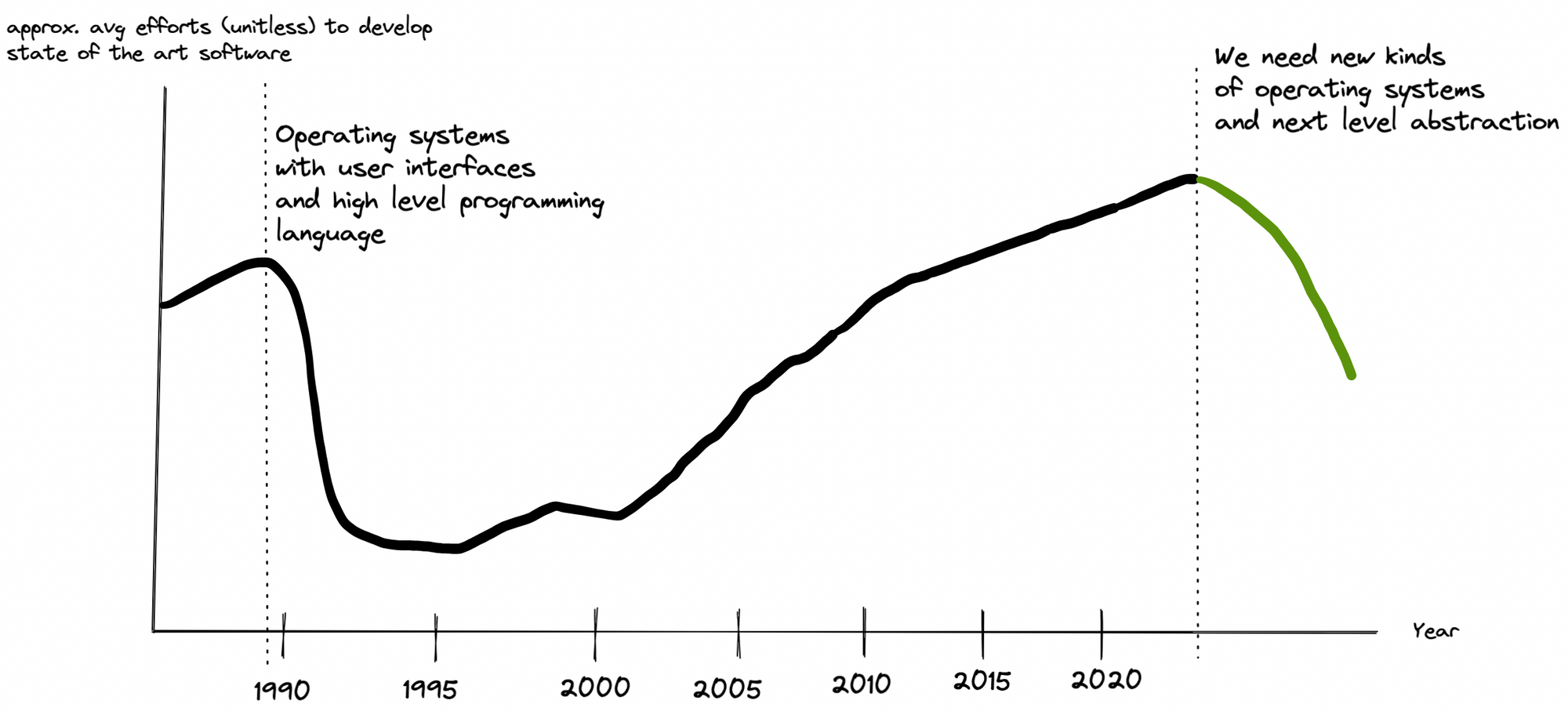

This drawing illustrates the development in a very simplified way. On the left are local applications, which became easier to develop with the introduction of the personal computer. Between roughly 2000 and 2005, web applications became the new standard, and their requirements grew rapidly. This also increased software development effort and brought back the mainframe situation.

For more background, we recommend Bob Reselman's article on devops.com, "Mainframes: The Cloud before the Cloud".

Conventional, stable, production-grade web applications use a number of technologies, each creating new job descriptions: Backend Developer, Frontend Developer, DevOps Engineer, Quality Assurance Engineer, and so on. As we all know, the list is much longer.

There are also countless interfaces that follow no standard whatsoever. As a result, large teams today spend months or even years developing banal, schematic applications.

Whenever software development has reached this point, it has found ways to reinvent itself, and it will do so again. The next disruption is on the horizon. What will it look like, and what will it bring?

Puzzle

It's actually very simple. All the pieces of the puzzle have to be united under one technological roof once again, so that developers can focus on their core competence: developing applications.

But what are these puzzle pieces?

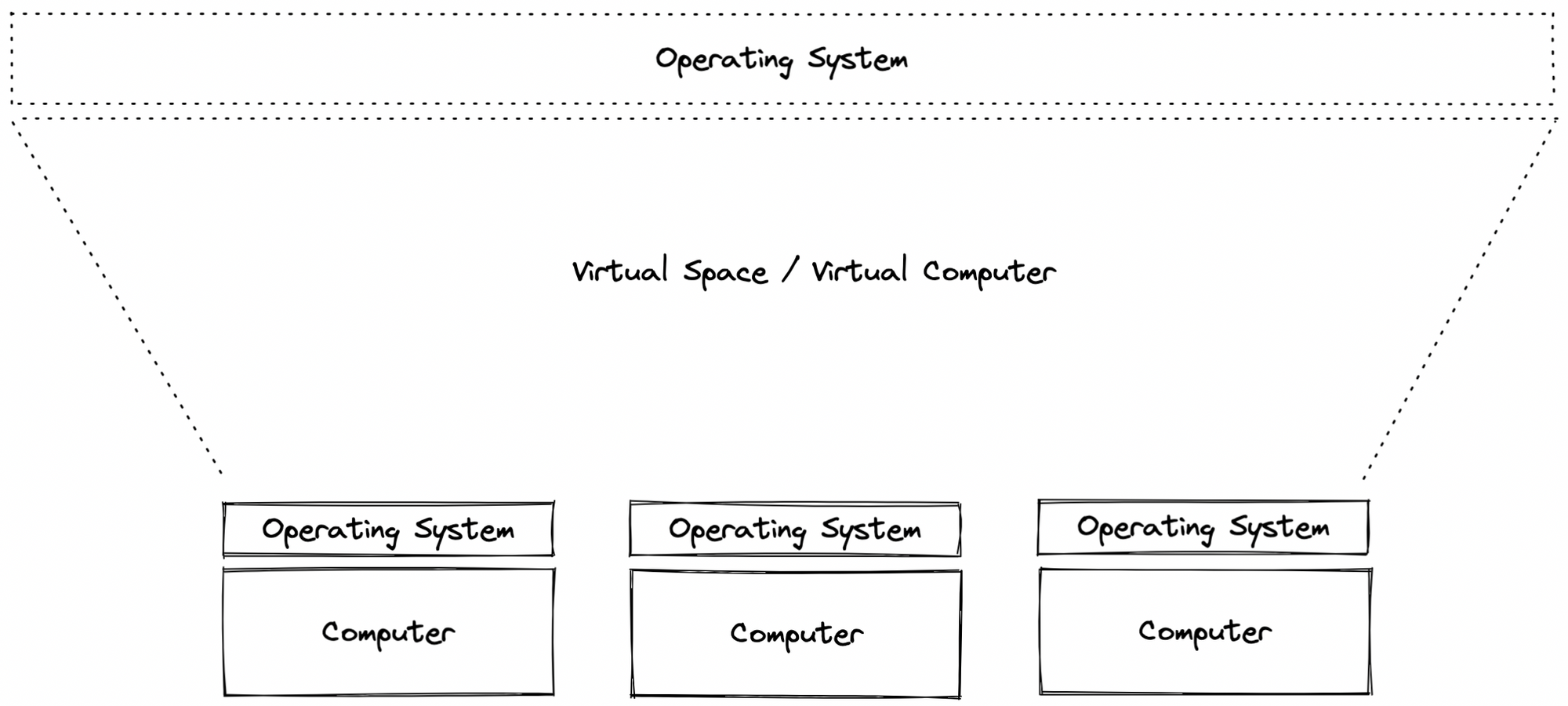

Our table shows that we are facing the same challenges as in the 80s. What we need is a new generation of operating systems that turns data centers, or distributed systems in general, into a single virtual machine: the virtual PC.

Let's take a closer look at the current challenges for today's web applications to find out what the next generation of operating systems needs to provide.

Core architecture (infrastructure perspective)

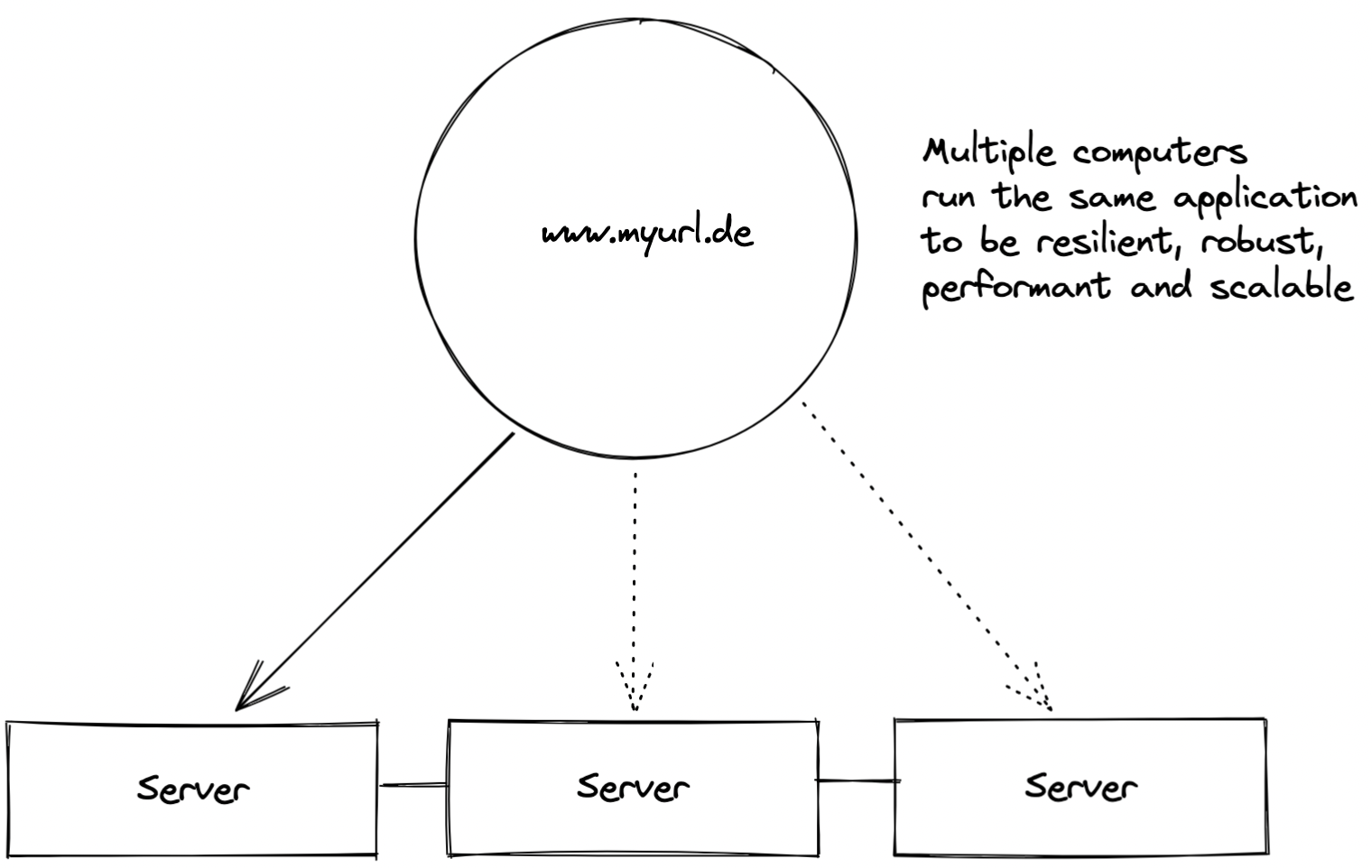

Multiple computers, mostly servers, run the same application or set of applications so that it does not fail as easily. This is called a cluster, and it is the usual approach for running professional apps.

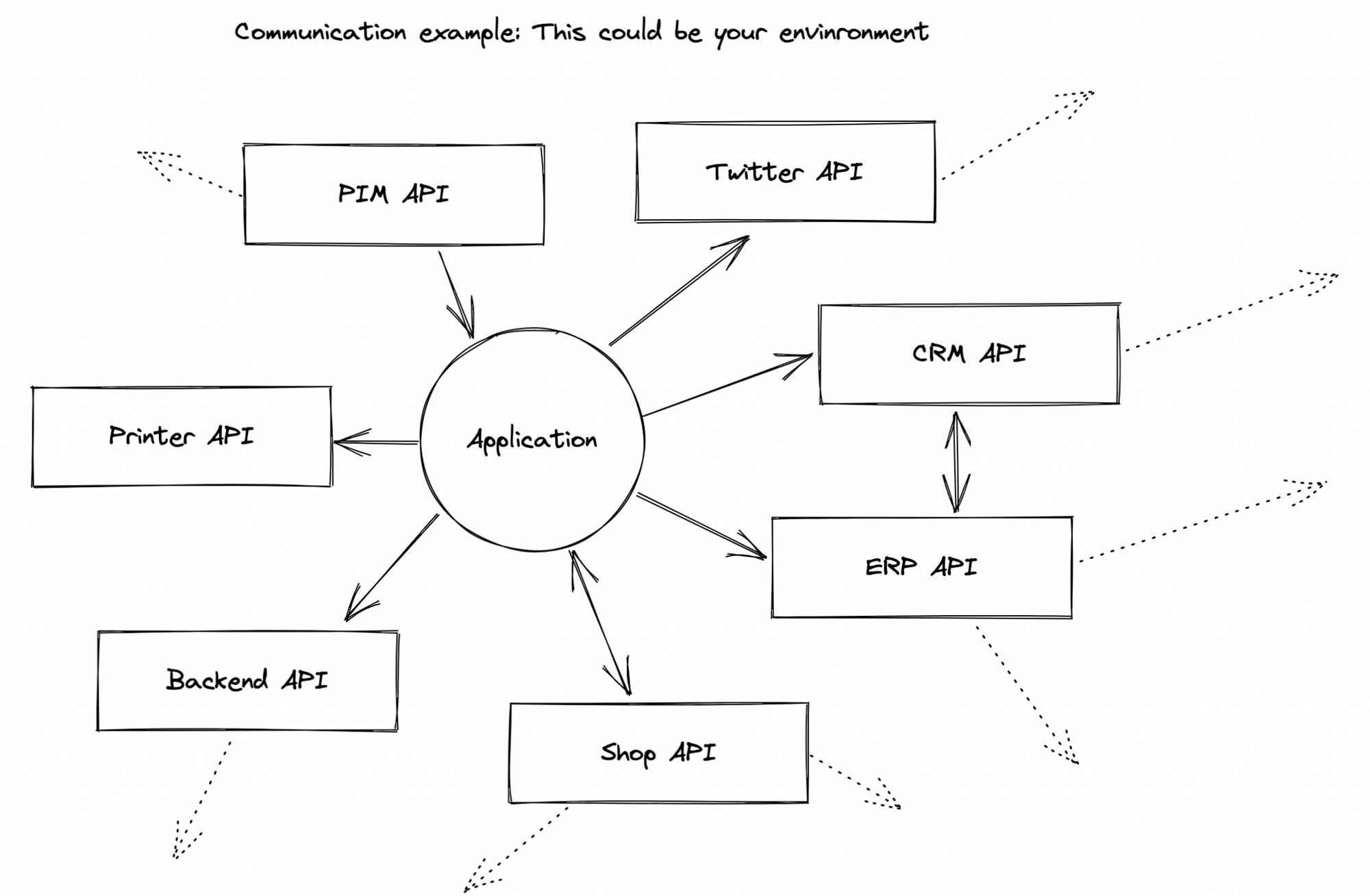

This cluster communicates with other clusters or individual computers via APIs. It is responsible for integration, access, user management and infrastructure management, which leads to the core issues discussed below.

Memory management

Every application needs memory. On the PC, memory management ensures that short-term data is stored in faster memory than long-term data, allowing programs to run and files to be stored. The memory of a cluster must also be managed: If three devices provide a total of 8GB RAM, the use of this memory must be organized and divided. The same applies to disk space. Although the devices regulate the specific memory allocation in their main memory or on the disk, they must be told which content is to be stored there.

As long as a company runs an application on a single server, memory management is still handled by the server's operating system. However, as soon as servers form a cluster, it is no longer clear where data should be retrieved from. There are good tools for this, but they have to be learned. This is usually the job of a system administrator or DevOps engineer, not something a developer can typically take on.

Automating this is an operating system's job, and automation reduces the demands placed on a development team.

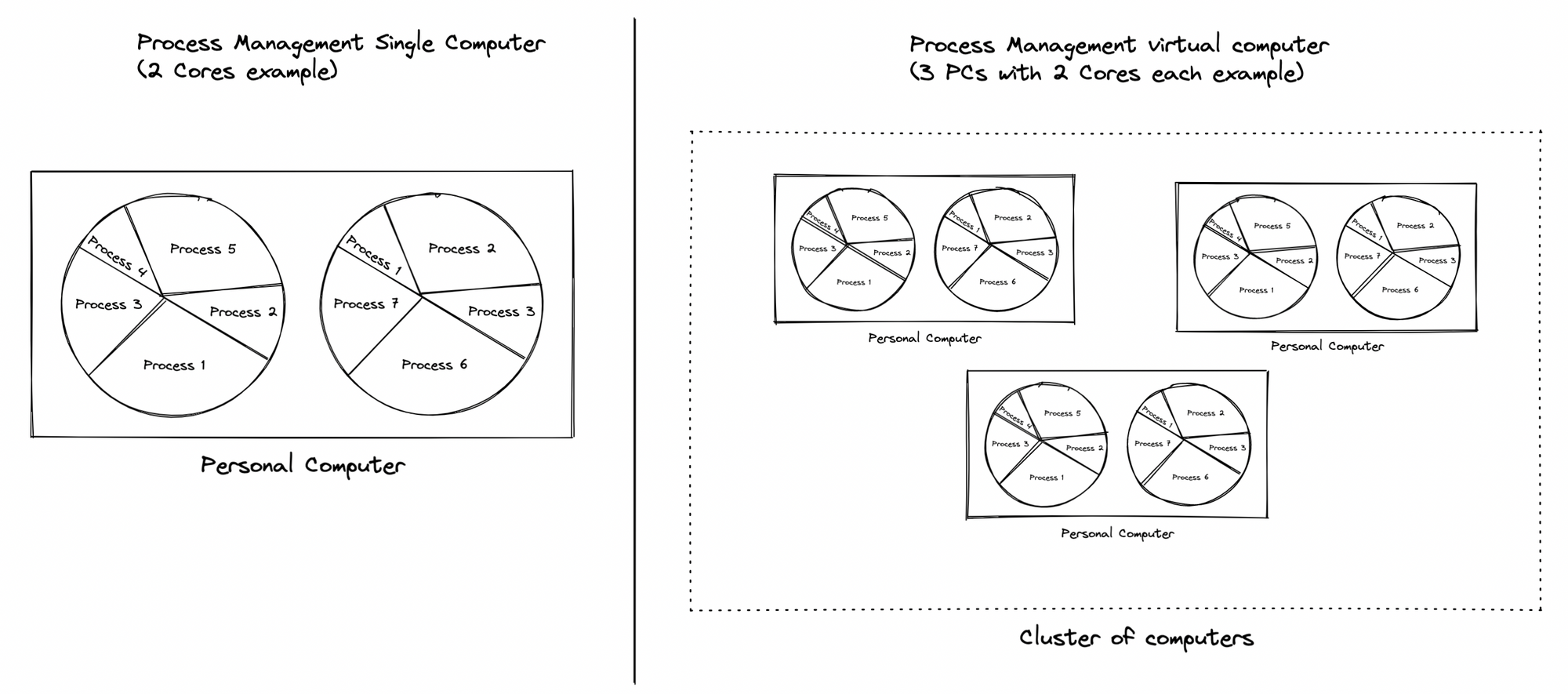

Process management

Processes, i.e. services and applications, require not only memory but also CPU time to run. Processing capacity is limited, so an operating system usually schedules processes. When several devices form a cluster, each computer still manages its own CPU, but the combined CPU capacity of the cluster is not managed. This requires a virtual scheduler that assigns tasks to suitable devices in the cluster. To do so, it needs to know how much CPU is in use and how much is free on each device.
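To make the idea concrete, here is a minimal sketch of such a cluster-level scheduler. It assumes each node reports its free CPU cores and free RAM; the `Node`/`Task` types and the "most free CPU wins" rule are illustrative assumptions, not how Kubernetes or CircleOS actually score nodes. The three example nodes add up to the 8 GB of RAM from the memory example above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    # Hypothetical view of one device in the cluster.
    name: str
    free_cpu: float   # free CPU cores
    free_ram_mb: int  # free RAM in MB


@dataclass
class Task:
    name: str
    cpu: float
    ram_mb: int


def schedule(task: Task, nodes: list[Node]) -> Optional[Node]:
    """Place a task on the node with the most free CPU that can fit it.

    Returns None if no node has enough free CPU and RAM.
    """
    # Filter step: keep only nodes with enough free capacity.
    candidates = [n for n in nodes if n.free_cpu >= task.cpu and n.free_ram_mb >= task.ram_mb]
    if not candidates:
        return None
    # Scoring step: prefer the least loaded node (most free CPU).
    best = max(candidates, key=lambda n: n.free_cpu)
    # Reserve the capacity so the next decision sees the updated state.
    best.free_cpu -= task.cpu
    best.free_ram_mb -= task.ram_mb
    return best


# Three devices with 4 GB + 2 GB + 2 GB = 8 GB of RAM in total.
nodes = [Node("a", 2.0, 4096), Node("b", 0.5, 2048), Node("c", 1.5, 2048)]
placed = schedule(Task("web", 1.0, 1024), nodes)
print(placed.name if placed else "unschedulable")  # prints "a"
```

Real schedulers split the same two phases (filtering feasible nodes, then scoring them) but weigh many more signals than free CPU.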

There are good automated solutions for scheduling work across a cluster, the best known being Kubernetes. So again, the puzzle piece already exists. The Kubernetes scheduler still needs a lot of improvement, but in general it works.

Currently, setting up and maintaining such Kubernetes clusters is left to DevOps engineers or experienced developers, which ties highly skilled people to core tech. We could make much better use of these people if an operating system automated this work.

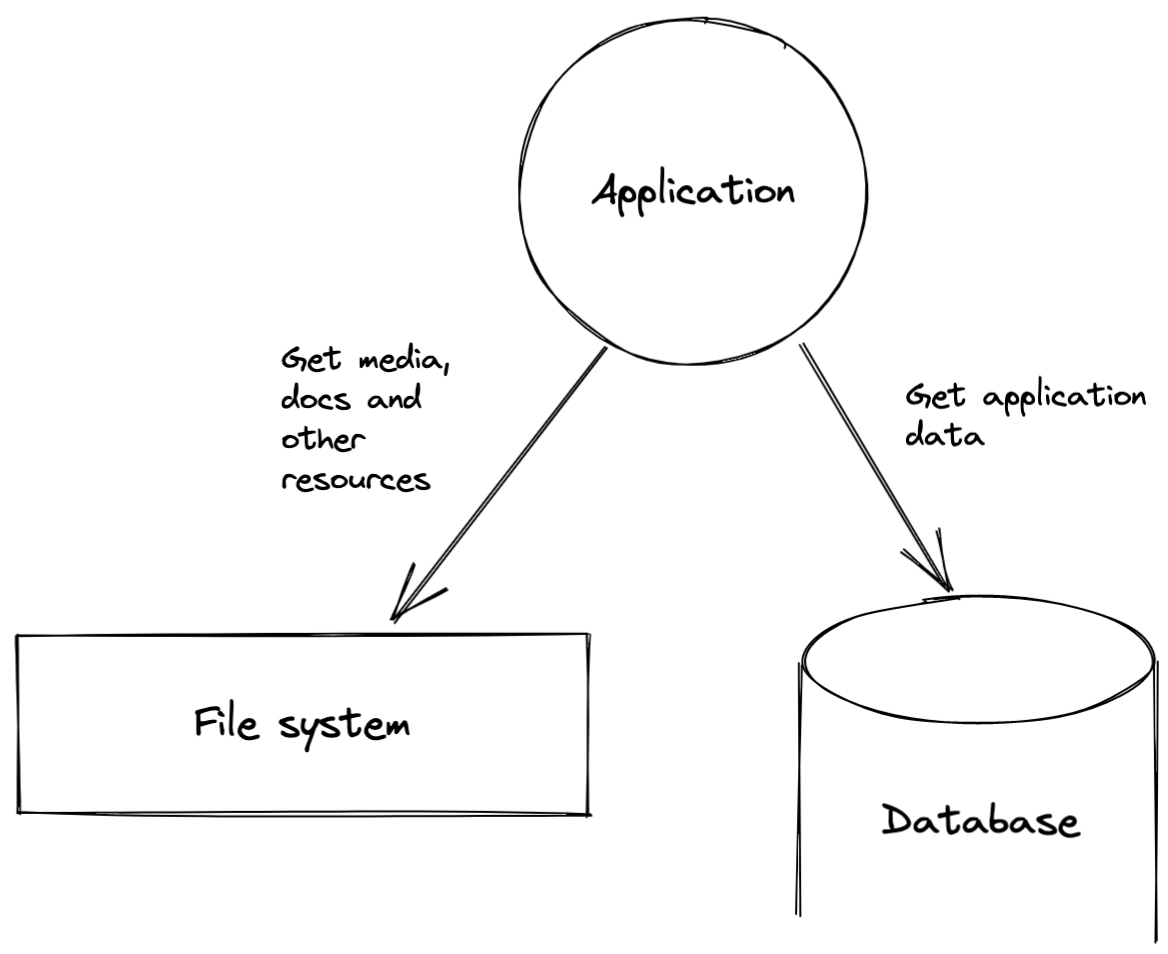

API Integration and data management

At the center of the PC is the file system with its associated file explorer. All data and its metadata live here, organized according to various criteria. All applications use the file system, and users can store, find and open data through the corresponding user interface, the file explorer.

Today's web applications often store data in hybrid structures: a real file system for large data such as images or videos, and a database for smaller data.

Good data management is not an easy task: you usually need to know a query language and understand file systems, backups and file system permissions. Done properly, data management takes the work of several people in the company. Typical job profile: database engineer with system administration skills or DevOps experience.

If a virtual operating system provided a distributed file system that abstracts this layer away, several people could be freed up for other tasks, and you would benefit from a robust system that follows common principles and requires little maintenance.

In addition to data management, a device operating system manages the connection and use of external devices such as printers. In the cloud, the periphery consists mainly of a large number of external interfaces that each perform a task, printing included.

However, from the web application's point of view, it is always an API. If there were standardized ways to connect and provide interfaces without custom interface development, the resources tied up in that work would be freed again.

User management

A PC without a login is unthinkable today. Almost every web application requires some type of login. What initially seems banal at the device level becomes a real challenge in multi-user, multi-app systems:

- Which user may use which function of which app, and when?

- Which app is allowed to access which kinds of resources?

- Which roles grant which permissions?

- How can all applications authenticate the same user (single sign-on)?
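The first three questions boil down to a role-based access check. The following is a minimal sketch, assuming permissions are plain strings of the form `app:function` and roles are sets of such permissions; the role names, users and data layout are hypothetical and do not reflect Keycloak's internal model. It ignores the "when?" part (time-based conditions), and single sign-on is a separate concern handled by a protocol such as OpenID Connect.

```python
# Hypothetical role -> permission mapping; permission strings are "app:function".
ROLES: dict[str, set[str]] = {
    "editor": {"blog:read", "blog:write"},
    "viewer": {"blog:read"},
    "admin": {"blog:read", "blog:write", "billing:read"},
}

# Hypothetical user -> roles assignment.
USER_ROLES: dict[str, set[str]] = {
    "alice": {"editor"},
    "bob": {"viewer"},
}


def permissions_of(user: str) -> set[str]:
    """Union of all permissions granted by the user's roles."""
    perms: set[str] = set()
    for role in USER_ROLES.get(user, set()):
        perms |= ROLES.get(role, set())
    return perms


def is_allowed(user: str, app: str, function: str) -> bool:
    """Answer 'may this user use this function of this app?'."""
    return f"{app}:{function}" in permissions_of(user)


print(is_allowed("alice", "blog", "write"))  # True
print(is_allowed("bob", "blog", "write"))    # False
```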

Programs such as Keycloak come into play here. They offer single sign-on via OpenID Connect as well as sophisticated user and authorization concepts. However, setting up and managing Keycloak or any comparable tool is complex and, if misconfigured, can lead to serious security vulnerabilities. This leaves you in need of an expert, such as a security specialist or an experienced developer or engineer.

An operating system that does this job would be extremely helpful, right?

User Interface and operator interface

Thanks to the operating system, users no longer have to deal with anything that lies behind the GUI. They use the PC through an interface, and software developers can write software in high-level languages instead of binary code. The operating system is a convenience for all user groups because it reduces overall complexity.

In the web environment, however, developers still have to deal with many things that the classic operating system abstracted away: black terminal windows, internal APIs, recipes, configs and deployment pipelines, to name just a few.

The virtual operating system must therefore encapsulate these areas as well as possible, so that developers do not get lost in details unrelated to their actual core task. Such details tie up an unnecessarily large amount of resources.

Conclusion

We see that the web environment faces exactly the same challenges that computing faced in the 80s. However, we already have many of the puzzle pieces. So it is only logical that an operating system will once again come to the rescue: one that bundles complexity and simplifies programming.

Our Solution

A virtual, decentralized OS for the cloud

CircleOS

With CircleOS, we have developed the first virtual, decentralized operating system for web-based systems that fulfills all of the tasks mentioned. While operating systems have so far focused on the individual device, CircleOS treats the network of devices as a virtual unit and creates a virtual computer from it. It does not replace existing operating systems. Instead, it uses them as the layer that connects it to each device's resources, and it interconnects the devices.

In short: CircleOS provides a decentralized network in which each device can still be used exactly as before. It brings existing technologies together into a harmonious whole and adds the parts that are currently missing, such as a distributed file system.

Let's take a look at the overall design and architecture of CircleOS to see how it tackles the challenge.

The Architecture

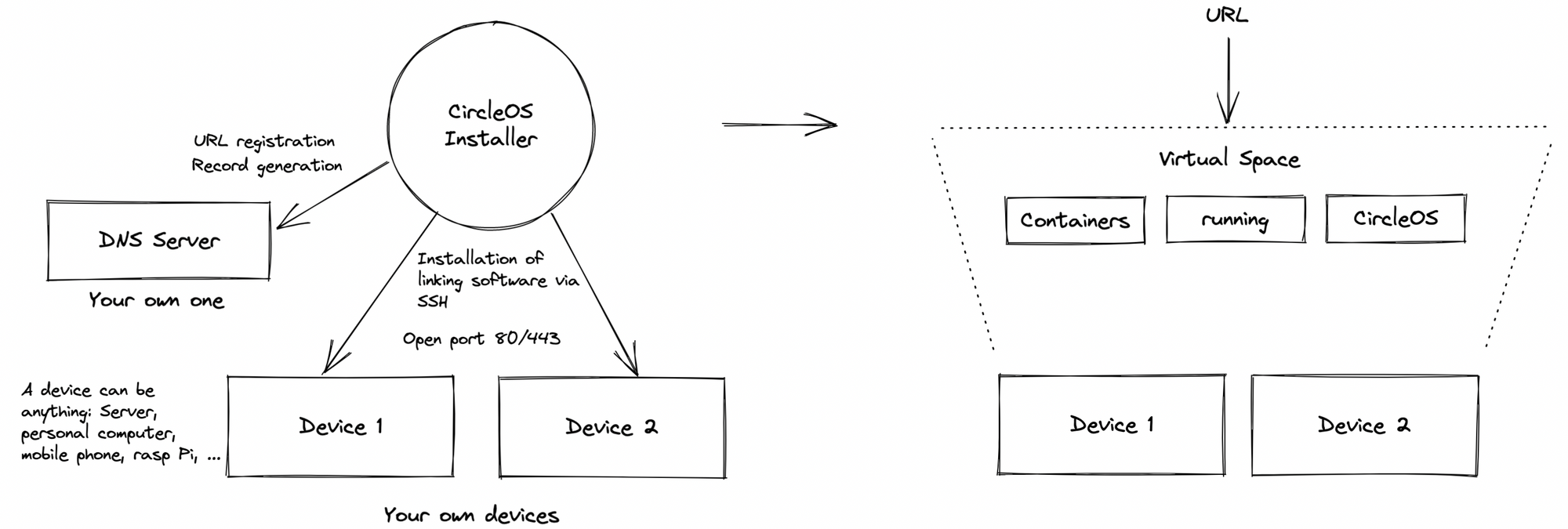

CircleOS runs on physical and/or virtual hardware. During installation, the user selects the devices or the provider that should supply resources for the CircleOS installation. Linking software is installed on each device and connects it to the other devices in the cluster. It provides some of the device's resources in the background, while the device itself can still be used as usual.

Connecting multiple devices creates a virtual space that can be accessed through an SSL-secured URL. All programs running in this space are containerized.

The devices themselves do not need to be connected to the internet. It is enough for them to communicate with each other through a few dedicated ports. This also means that the entire system can be installed on an intranet without internet access. In this case, the URL is a local one. The corresponding SSL certificate is either self-signed and must be marked as trusted, or an existing certificate is used.

After installation, which takes a few minutes, the user opens the specified URL in the browser and lands on a login page. Behind the login is the desktop, which shows every web-capable app as an icon. Clicking an icon opens the app, which technically runs on a subdomain.

Disk management, data management and all other tasks are handled automatically by the system. Users can download additional apps via the App Store, which covers most apps from Docker Hub, or develop their own apps, which they then publish and install as private applications in their App Store.

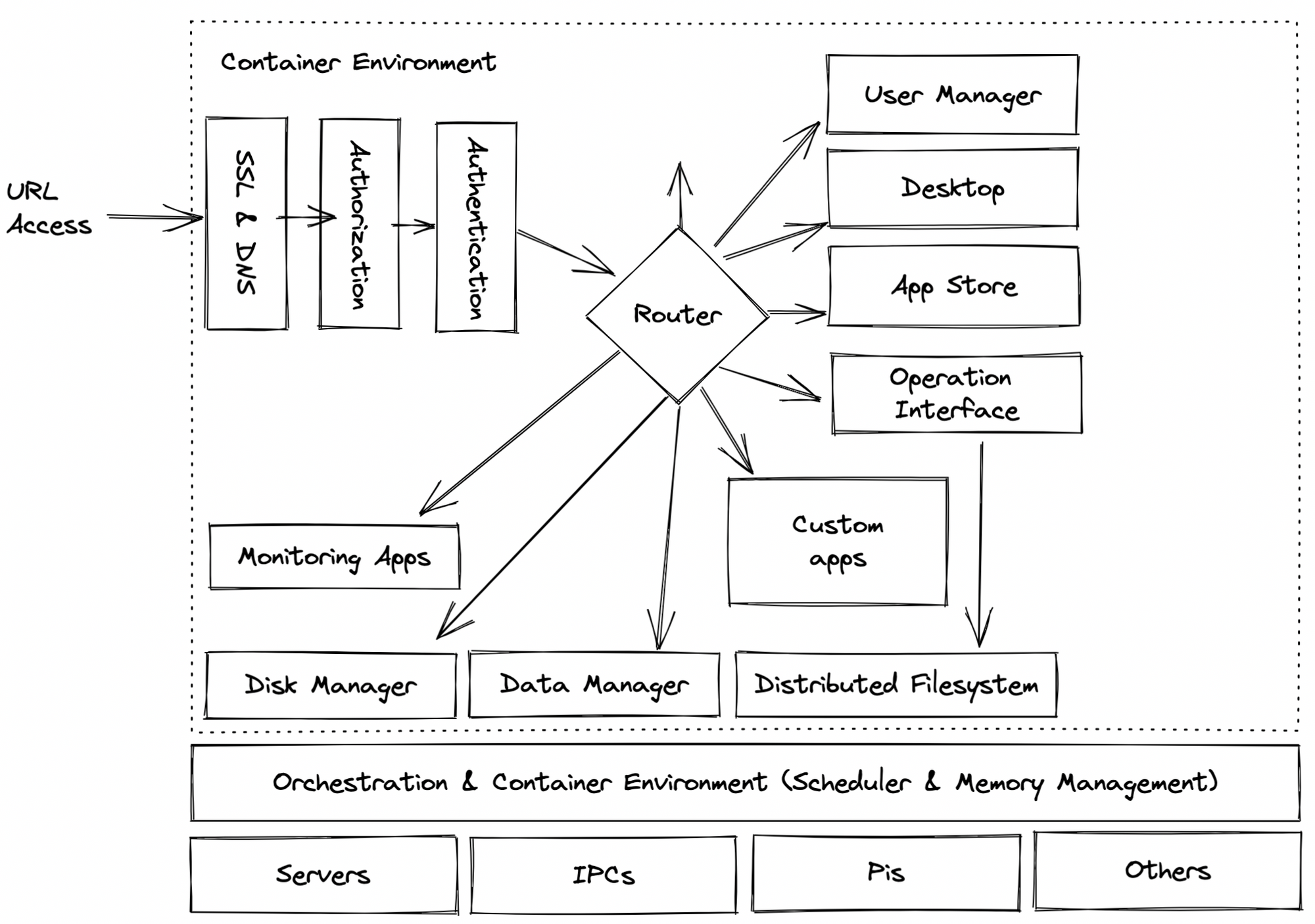

This image shows in detail what happens inside the virtual space.

From a developer's perspective, developing applications feels the same but requires less code. Developers can still use the language and style of their choice, containerize the application and publish it. In addition, they provide a few config files that allow the app to be published at the push of a button, without a separate, time-consuming deployment process. Developers no longer have to worry about technical details such as user management or security in general, because the app is embedded in an environment that provides all of this.

CircleOS also offers its own SDK, which can be used to develop applications much faster. We will describe the SDK in detail in a separate blog post.

Now let's take a look at how we solved the technical challenges described before.

In general, we can say: Whenever there was a great and well-known tool solving our problem, we made use of it. We want to provide a system that will feel familiar to you – at least in parts.

Memory management

As mentioned, there are good tools for managing storage, but they need to be operated by professionals. The goal of CircleOS was to use these tools in such a way that they do the right thing fully automatically while remaining fully configurable.

We use Longhorn as the basis for this feature. By default, Longhorn is configured with three replicas per volume. This behavior can be customized via the UI or via the app configuration, i.e. the additional CircleOS config files mentioned in the previous section. This makes Longhorn easy to use without diving deep into technicalities.
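Why three replicas? Each copy of a volume should live on a different node, so that losing one device does not lose the data. The sketch below shows that placement rule in its simplest form, assuming each node reports its free disk space in GB. The function name and the "most free space first" preference are hypothetical illustrations, not Longhorn's actual placement logic.

```python
def place_replicas(volume: str, nodes: dict[str, int], size_gb: int, replicas: int = 3) -> list[str]:
    """Pick `replicas` distinct nodes with enough free disk for a volume.

    `nodes` maps a node name to its free disk space in GB. Nodes with the
    most free space are preferred. Raises ValueError if fewer than
    `replicas` nodes can hold a copy.
    """
    fitting = [name for name, free in nodes.items() if free >= size_gb]
    if len(fitting) < replicas:
        raise ValueError(f"{volume}: need {replicas} nodes, only {len(fitting)} fit")
    # Sort by free space, largest first; ties broken by name for determinism.
    fitting.sort(key=lambda name: (-nodes[name], name))
    chosen = fitting[:replicas]
    for name in chosen:
        nodes[name] -= size_gb  # reserve space on each chosen node
    return chosen


disks = {"node-1": 100, "node-2": 40, "node-3": 80, "node-4": 10}
print(place_replicas("pgdata", disks, size_gb=20))  # ['node-1', 'node-3', 'node-2']
```

Because every replica lands on a distinct node, any single node can fail while two copies of the volume remain available.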

RAM, on the other hand, is managed by K3s. We are currently working on our own scheduler to improve RAM and CPU allocation. This scheduler will be based on reinforcement learning and trained on its own process data. We will let you know when it is ready. Test results show that we can use the hardware up to 40% more effectively.

Process management

As mentioned, we use K3s to manage CPU and RAM.

In this context, it is worth mentioning that CircleOS provides an autoscaler that, if configured, requests additional virtual resources from a provider when load is high and releases them when load is low. This way, we can not only manage the available resources but also add new ones, an advantage that a single computer does not have. This makes the overall system significantly more stable without making it exorbitantly expensive, since it also scales down when load is low. Scaling currently takes about half a minute, and there is still room for improvement here.
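The scaling rule described above can be sketched as a simple threshold controller, assuming cluster-wide average CPU utilization as the load signal. The thresholds, the `min_nodes`/`max_nodes` bounds and the function name are hypothetical; the actual CircleOS autoscaler and its provider integration are not described here. Using two different thresholds (hysteresis) keeps the cluster from adding and removing nodes in quick succession.

```python
def desired_nodes(
    current: int,
    avg_cpu_util: float,
    scale_up_at: float = 0.8,
    scale_down_at: float = 0.3,
    min_nodes: int = 1,
    max_nodes: int = 10,
) -> int:
    """Return the node count the cluster should have for the observed load.

    avg_cpu_util is the cluster-wide average CPU utilization in [0, 1].
    Between the two thresholds the cluster size stays unchanged, which
    prevents flapping when load hovers around a single threshold.
    """
    if avg_cpu_util > scale_up_at:
        return min(current + 1, max_nodes)  # request one more node from the provider
    if avg_cpu_util < scale_down_at:
        return max(current - 1, min_nodes)  # release one node back to the provider
    return current


print(desired_nodes(3, 0.92))  # 4
print(desired_nodes(3, 0.50))  # 3
print(desired_nodes(3, 0.10))  # 2
```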

API Integration and data management

The file system behind CircleOS has become its most powerful module.

It applies the principle "everything is a file", which originates in Unix and is also the idea behind the FUSE API.

Everything (apps, processes, structured data, media) is stored as a file and can be modified using classic file operations. The data is classified using a purpose-built type system and mapped into programs. The file system can be addressed via a REST API and/or a WebSocket connection and is distributed across all nodes of the cluster. This allows apps to connect to the file system, subscribe to folders and thus be notified of every change as it happens.
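The subscribe-to-folder idea can be illustrated with a small in-memory model: a write to a path notifies every subscriber whose folder contains that path. This is a hypothetical sketch of the concept only; the class and method names are invented, and it does not attempt what the CircleOS file system does on top of this (distribution across nodes, access via REST and WebSocket, the type system).

```python
from collections import defaultdict
from typing import Any, Callable

Callback = Callable[[str, Any], None]


class SubscribableFS:
    """Hypothetical in-memory file store with folder subscriptions."""

    def __init__(self) -> None:
        self._files: dict[str, Any] = {}
        self._subs: defaultdict[str, list[Callback]] = defaultdict(list)

    def subscribe(self, folder: str, callback: Callback) -> None:
        # Normalize to a trailing slash so "/projects" does not match "/projects-old".
        self._subs[folder.rstrip("/") + "/"].append(callback)

    def write(self, path: str, content: Any) -> None:
        self._files[path] = content
        # Notify every subscriber whose folder contains this path.
        for folder, callbacks in self._subs.items():
            if path.startswith(folder):
                for cb in callbacks:
                    cb(path, content)

    def read(self, path: str) -> Any:
        return self._files[path]


fs = SubscribableFS()
events: list[str] = []
fs.subscribe("/projects", lambda path, content: events.append(path))
fs.write("/projects/demo/settings.json", {"theme": "dark"})
fs.write("/media/logo.png", b"...")
print(events)  # ['/projects/demo/settings.json']
```

In a frontend, the callback would update the UI directly, which is why such a file system can stand in for a hand-written backend in many apps.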

This creates a quasi-live system in which everything is constantly in sync. The file system can serve as the backend for frontend applications, so there is no need to program a backend at all. It is based on MinIO object storage, a PostgreSQL database and Apache Kafka.

CircleOS thus has its own no-code approach and makes app development much faster and easier, because the work of backend developers, database engineers and DevOps engineers becomes much leaner.

User management

From a technical point of view, users are managed via Keycloak. However, we have built many utilities around Keycloak that reduce overall management to users, roles and permissions. The Keycloak UI usually does not have to be used, and realms do not have to be configured. It is sufficient to create additional permissions and use them in the apps accordingly.

All applications are integrated via OpenID Connect, so there is single sign-on for all applications, open-source and custom-built alike.

Not having to deal with these details anymore and using a ready-made setup instead is very convenient. A Keycloak specialist is no longer necessary.

User Interface and operator interface

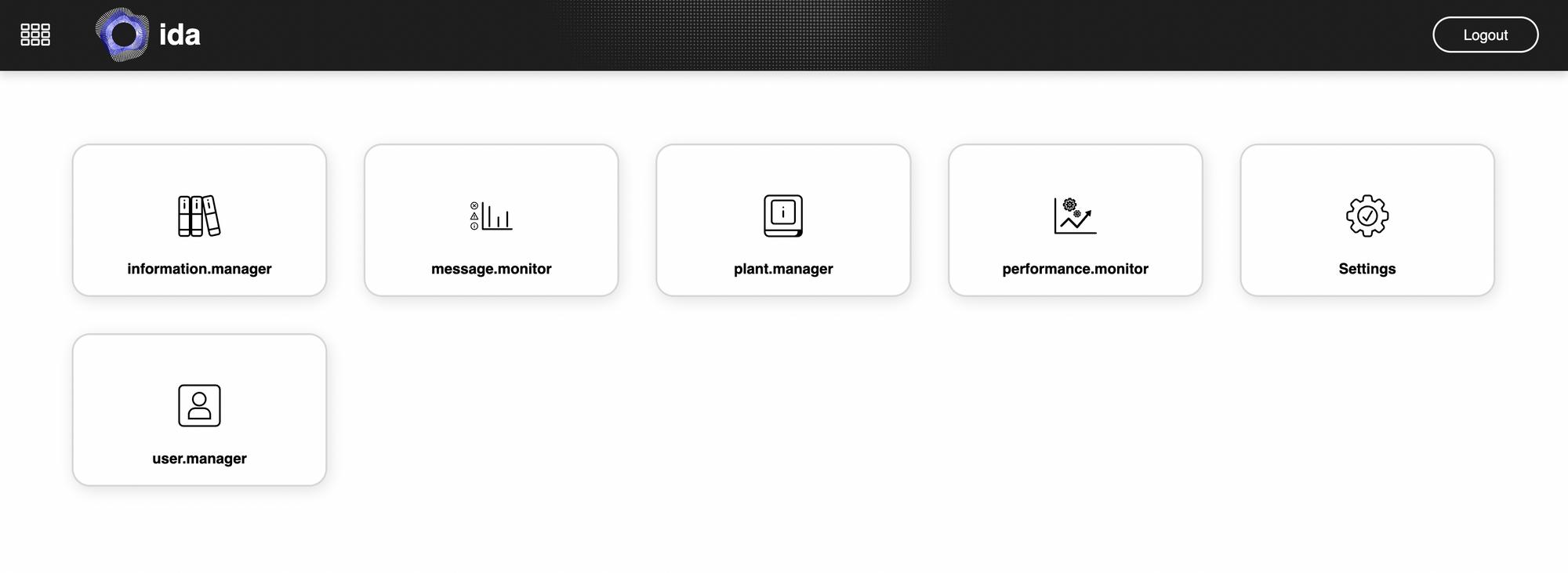

CircleOS comes with a desktop that organizes applications and acts as a start screen. Everything runs smoothly in the same browser tab. Using the home button, users can jump back to the desktop from any open application.

The internal services are addressed via the file system, which serves as the operator interface. That way, all applications can be controlled technically through one and the same interface. Developers no longer have to deal with several different interfaces, only with a single one that follows a fixed, comprehensible principle.

Let's have a short look at the user interface of one of our customers.

CircleOS is complete in the sense of an operating system and fulfills all core functions, including from a user interface perspective. It is not a theoretical product or a research project. It is already used in production by companies and provides the basis for innovations in mechanical engineering and AI. Our developers use it every day to create state-of-the-art cloud systems.

The disruption

What exactly is the benefit of such an operating system?

Well, that's the ultimate question. There are so many benefits that it's hard for us to boil our answer down to the most essential things. But let's try.

- Around 90% less effort for core tech tasks.

- Around 60% less effort for web projects in general. We reinvest part of these savings in better UX and UI.

- Get started quickly. Just install the system and start developing after a few minutes. Installation is a one-click command that can even request servers from a provider if configured. You don't have to worry about anything.

- The best developers in the company, who are usually tied up with core tech issues, can devote themselves to tasks that matter more to the core business.

- Access to all important open source projects at the push of a button.

- Accelerate the advancement of your own technology.

- Become independent of data centers, hardware or providers. Build your decentralized cluster around the globe and protect yourself from crises.

Just compare using a PC before and after the operating system and think about the benefits. That is what CircleOS does for the web world.

Even if you don't see any benefits for yourself in this list, or you think such a system is not really needed, remember Thomas Watson, President of IBM, who reportedly said in 1943:

I think there is a world market for maybe five computers.

- Thomas Watson, President of IBM, 1943

We believe that individuals and companies alike need a well-designed and secure home for their web applications and data.

The future

Our goal is to make CircleOS accessible to more and more companies and people. Access is currently exclusive to our customers. This helps us find out which functions need to be improved from the operator's point of view and which documentation needs to be added.

If you want to take an exclusive look at it today, get in touch with us.

And if you want to help us build this future and drive other important outcomes, hit us up 😉.